Let's cut through the AI agent noise.

Every week there's another "revolutionary" agent framework, another startup claiming their AI just replaced an entire department, another think-piece about AGI being six months away. Most of it is noise — and none of it is useful if you're actually trying to understand where this technology is going and what it means for real businesses.

So here's what's actually happening, backed by hard numbers from PwC, McKinsey, Gartner, GitHub, and other organizations that measure real-world adoption — not vibes. These are the trends that are driving investment decisions, changing hiring plans, and reshaping entire industries right now.

Top AI agent trends dominating 2026:

- Computer-Use & GUI Agents — Agents that operate software like humans, unlocking 85% of enterprise systems with no API

- Agentic Coding Takes Over Software Engineering — 76% of developers use or plan to use AI coding tools; Cursor hit $500M ARR in under 2 years

- Reasoning Models Become Agent Backbones — o3 scores 87.5% on ARC-AGI, surpassing human baseline of 85%

- Enterprise Digital Workforce at Scale — 79% of companies are actively deploying AI agents; 171% projected ROI

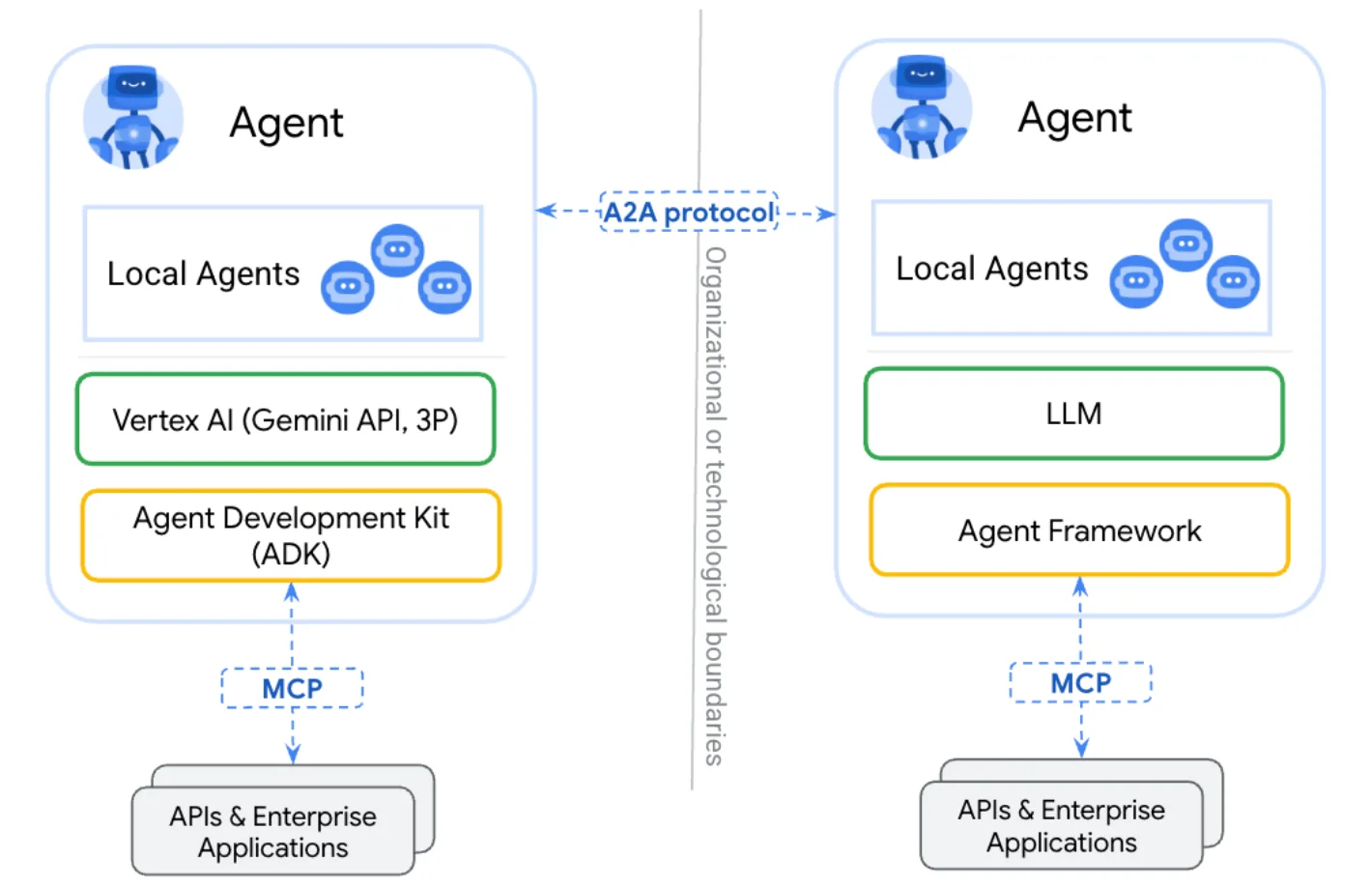

- MCP & Protocol Standardization — The TCP/IP moment for agents; OpenAI, Google, and Microsoft all adopted MCP in 2025

- Multiagent Orchestration Goes to Production — Teams of specialized agents replacing single-agent deployments

- Vertical Domain Agents — Harvey (legal), Abridge (healthcare), Kensho (finance) outperform generalist models on narrow tasks

- Persistent Memory & Lifelong Agent Identity — Agents that remember across sessions are creating irreplaceable user lock-in

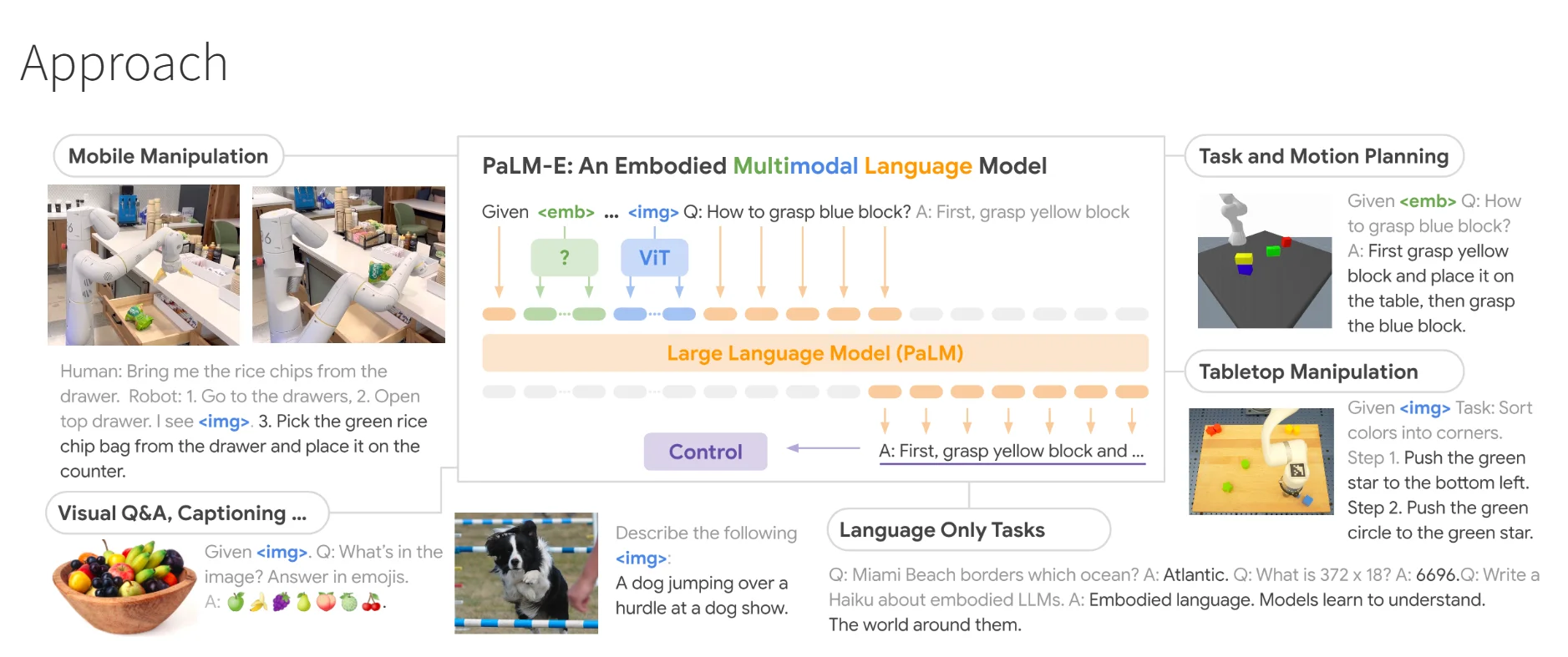

- Embodied Robotic Agents — Figure AI raised $675M; physical agents are entering commercial deployment in 2026

- Agentic Mesh Networks — Google's Agent2Agent protocol signals the emerging "internet of agents"

Let's get into it.

1. Computer-Use & GUI Agents — The Unlock Everyone Missed

Here's the number that changes everything: fewer than 15% of enterprise software applications have adequate external APIs. That means roughly 85% of the software that runs businesses today can only be automated through the graphical interface — the same way a human uses it.

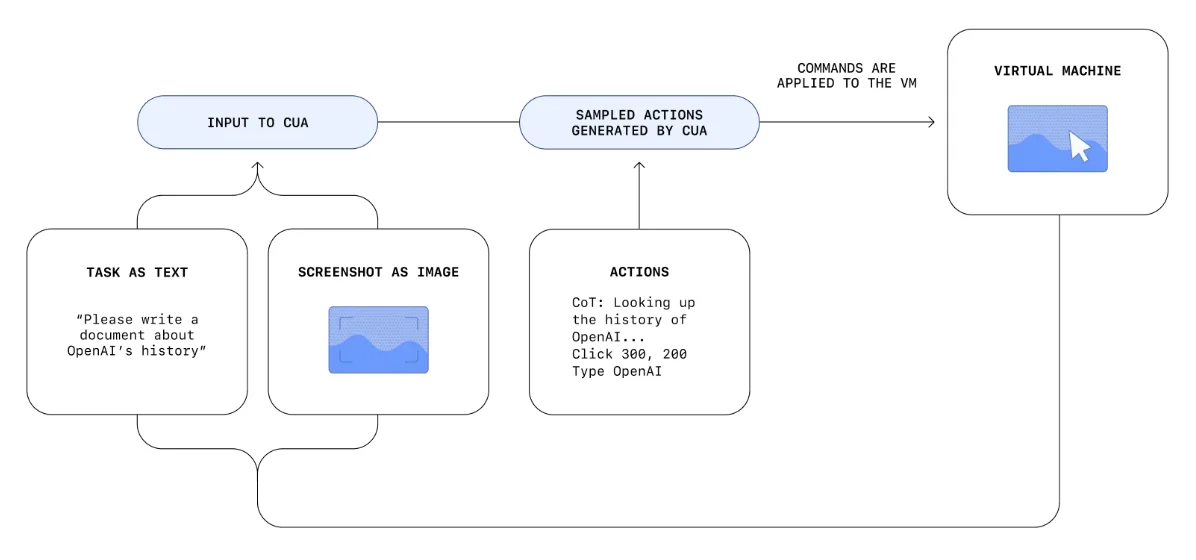

Computer-use agents solve this. Instead of waiting for vendors to build APIs, agents click buttons, fill forms, navigate menus, and operate software exactly as a human would.

Anthropic launched Computer Use in October 2024, letting Claude operate desktop applications, web browsers, and software tools by analyzing screenshots and taking actions. It was rough at launch — about 22% success on OSWorld benchmarks — but it proved the concept. OpenAI launched Operator in January 2025, their own computer-use agent, capable of booking travel, filing forms, and navigating complex multi-step web workflows autonomously.

The benchmark progress has been steep. OSWorld's public leaderboard tracks agent performance on real computer tasks. Models went from single-digit success rates in early 2024 to well over 30% by early 2025. Newer models like Claude Sonnet 4.6 and specialized agents like Coasty have since pushed OSWorld success rates to 72–82%, effectively reaching or exceeding the human baseline of ~72%.

What's happening in the real world

Anthropic deployed Computer Use to enterprise customers in late 2024, with use cases including automated software testing, data entry across legacy systems, and multi-application research workflows. Early enterprise testers reported eliminating entire categories of manual data-entry work. Anthropic has been transparent, however, that the capability is still early and can be slower than traditional API integrations.

OpenAI's Operator was put to immediate commercial use in travel booking, expense processing, and government form submission. Unlike traditional RPA (Robotic Process Automation) tools, which require rigid scripts (e.g., "click pixel 200,400"), Operator uses vision-based reasoning. It "sees" a button labeled "Submit" and understands how to interact with it regardless of layout changes.
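To make the contrast with pixel-scripted RPA concrete, here is a minimal sketch. It assumes a vision model has already turned a screenshot into a list of labeled elements; the `UIElement` type and both click functions are hypothetical illustrations, not Operator's actual interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UIElement:
    """One on-screen element, as a hypothetical vision model might report it."""
    label: str
    x: int
    y: int


def rpa_click(_screen: list) -> tuple:
    # Rigid script: always clicks the same pixel, whatever is on screen.
    return (200, 400)


def agent_click(screen: list, target_label: str) -> tuple:
    # Label-based targeting: find the element by what it says, not where it is.
    for element in screen:
        if element.label.lower() == target_label.lower():
            return (element.x, element.y)
    raise LookupError(f"No element labeled {target_label!r} on screen")


def hits(screen: list, point: tuple, label: str) -> bool:
    # True if the clicked point lands on the element with the given label.
    return any(e.label == label and (e.x, e.y) == point for e in screen)


old_layout = [UIElement("Cancel", 80, 400), UIElement("Submit", 200, 400)]
new_layout = [UIElement("Submit", 520, 640), UIElement("Cancel", 400, 640)]

print(hits(old_layout, rpa_click(old_layout), "Submit"))              # True
print(hits(new_layout, rpa_click(new_layout), "Submit"))              # False
print(hits(new_layout, agent_click(new_layout, "Submit"), "Submit"))  # True
```

The scripted click only works while the layout stays frozen; the label lookup survives the redesign. A real agent would also have to handle ambiguous or missing labels, which this sketch only signals with an exception.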

Microsoft integrated computer-use capabilities into Copilot Studio's Power Automate flows, letting enterprise users build agents that operate legacy Windows applications without API access. This "API-less" automation, in which the AI works through the visual interface of older software that lacks modern cloud connections, directly unlocks automation for SAP, Oracle, and decades-old internal tools.

Where this is heading

2026 is the year computer-use agents move from impressive demo to production infrastructure. Every major RPA vendor — UiPath, Automation Anywhere, Blue Prism — is racing to rebrand as "AI agent" platforms. The incumbents understand what's at stake: an agent that can autonomously operate any software replaces years of custom scripting and $50,000-per-seat RPA licenses.

The companies that get ahead will build internal "agent operators" — not one agent, but fleets of them — each licensed to access specific systems, with audit trails that satisfy compliance requirements. The bottleneck isn't the technology anymore. It's governance.
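As a rough illustration of what "licensed to access specific systems, with audit trails" could look like in code, here is a small sketch. The `AgentOperator` class, the system names, and the log fields are all assumptions made for the example, not any vendor's product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentOperator:
    """A hypothetical agent identity with an allow-list and an audit trail."""
    name: str
    allowed_systems: frozenset
    audit_log: list = field(default_factory=list)

    def act(self, system: str, action: str) -> bool:
        # Every attempt is logged, whether or not it is permitted.
        allowed = system in self.allowed_systems
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": self.name,
            "system": system,
            "action": action,
            "allowed": allowed,
        })
        return allowed


invoice_agent = AgentOperator("invoice-agent", frozenset({"erp"}))
ok = invoice_agent.act("erp", "create_invoice")          # permitted
denied = invoice_agent.act("hr_portal", "read_salaries")  # blocked, still logged
```

Logging denied attempts alongside permitted ones is the design choice that matters here: compliance teams usually want to see what an agent tried to do, not only what it did.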

2. Agentic Coding Is Eating Software Engineering

This might be the fastest-moving trend in all of tech right now. According to the 2024 Stack Overflow Developer Survey of over 65,000 developers, 76% are using or planning to use AI tools in their development workflow, up from 70% the prior year, and the share already using them jumped from 44% to 62%. That's not gradual adoption. That's a category going vertical.

Cursor, an AI-native code editor, reached an estimated $500 million in annual recurring revenue less than two years after launch, and reportedly crossed $1 billion ARR by late 2025. GitHub's own research on Copilot found that developers completed a benchmark coding task up to 55% faster and were significantly more likely to finish it.

But here's what makes this an agent story specifically: the shift from AI autocomplete to AI that autonomously writes, tests, debugs, and ships code.

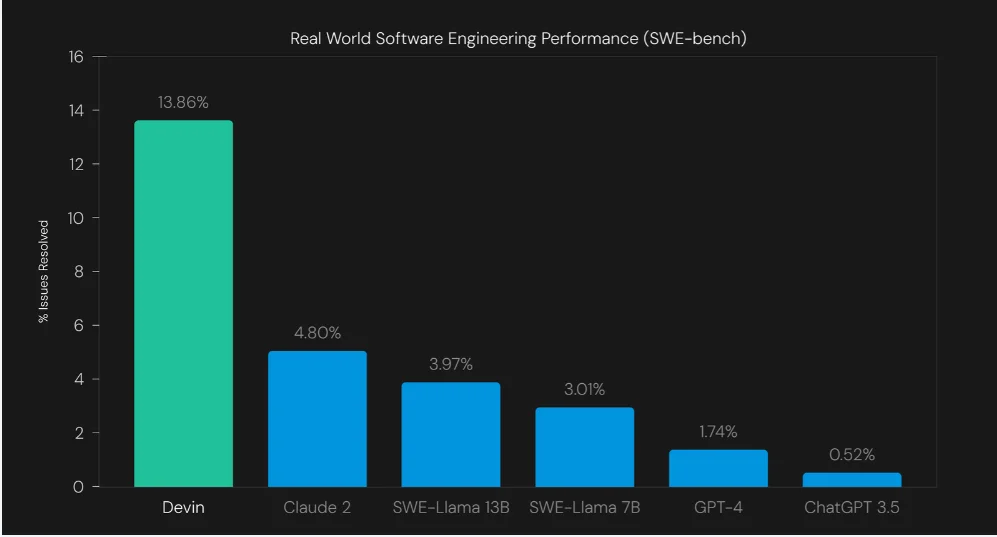

Cognition's Devin, billed as the first AI software engineer, can spin up environments, search documentation, write entire features, fix failing tests, and open pull requests without human intervention at each step. Devin famously scored 13.86% on SWE-bench (unassisted), far exceeding previous non-agentic models.

The job of a developer is shifting from "writing code" to "reviewing, directing, and orchestrating AI that writes code."

What's happening in the real world

GitHub's Copilot Workspace, launched in 2024, has evolved with a dedicated Agent Mode into a full agentic environment. An engineer describes a task; Copilot drafts a plan, makes changes across the codebase, repairs test failures, and iterates until it has a working implementation ready for human review.

Cursor built an entirely new IDE philosophy around agents. Its Composer feature, powered by a dedicated Agent Mode, lets developers describe multi-file changes in plain English, with the agent understanding project context well enough to make coherent edits across an entire codebase — not just the open file. In late 2025/early 2026, Cursor launched custom Composer models specifically optimized for agentic tasks like reading file structures and applying precise, codebase-wide edits at speeds and costs that frontier models can’t match.

Anthropic ships Claude Code, a terminal-based coding agent with full filesystem access, git integration, and the ability to run tests, debug failures, and implement features end-to-end. It's already being used in production engineering teams to handle PR reviews, bug fixes, and exploratory refactors autonomously.

Where this is heading

The framing is shifting in 2026 from "AI that helps developers" to "AI that is the developer, supervised by humans." The widely reported use of AI by DOGE (Department of Government Efficiency) to rapidly audit and rewrite government code, in days rather than months, demonstrated what's possible when agents operate without the usual bureaucratic friction.

Right or wrong politically, it was a proof of concept the enterprise world took note of. The question every CTO is now asking isn't "should we use AI coding agents?" It's "how many human engineers do we need to supervise them?" For a broader look at how AI is reshaping the full developer toolchain — from TypeScript's rise to cloud-native infrastructure — see our software development trends for 2026.

3. Reasoning Models Become the Agent Backbone

Not all AI is created equal — and the gap between standard language models and reasoning models is becoming the most important distinction in the agent world.

Here's the benchmark that got everyone's attention: OpenAI's o3 model scored 87.5% on the ARC-AGI benchmark, a test specifically designed to measure fluid intelligence and novel problem solving. The human baseline is 85%. Standard GPT-4-class models scored in the single digits on the same test. That's not an incremental improvement. That's a different category of capability.

The reason this matters for agents: most real-world tasks aren't pattern-matching exercises. They require multi-step planning, hypothesis testing, course correction, and handling situations the model has never seen before. Standard models trained to predict the next token hit walls fast on these tasks. Reasoning models — trained to "think before they speak" using extended chain-of-thought — navigate them.

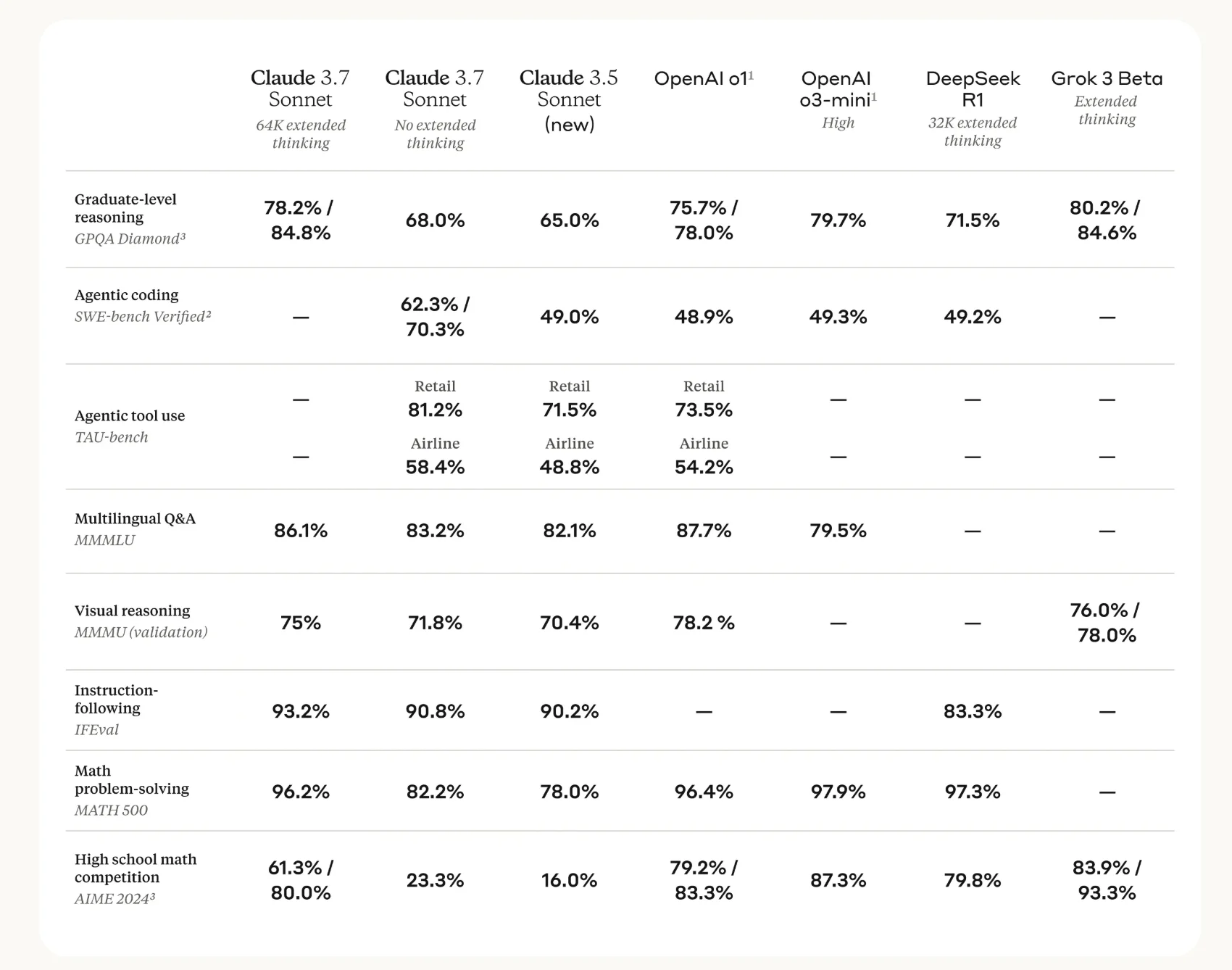

DeepSeek released R1 in January 2025 under an MIT license, bringing o1-class reasoning to the open-source world at a fraction of the cost. The implications for agent deployment economics are significant — enterprise teams no longer need frontier model API pricing to get reasoning-capable agents.

What's happening in the real world

OpenAI's o3 and o4-mini have become the default backbone for Operator and complex agentic tasks within the ChatGPT ecosystem. The reasoning capability means agents can handle multi-step customer service escalations, legal document analysis, and financial modeling workflows where a single wrong inference cascades into larger errors.

Anthropic's Claude with extended thinking mode allows agents to spend processing time on hard sub-tasks before committing to an action — particularly useful for coding agents where a wrong architectural decision early in a task is expensive to unwind.

DeepSeek R1, being open-source and deployable on-premise, has been rapidly adopted by enterprises with strict data residency requirements — particularly in finance, healthcare, and government — where sending data to external APIs is not an option. It's made reasoning-capable agentic deployment accessible to organizations that were previously locked out.

Where this is heading

Reasoning models are becoming the minimum viable backbone for any serious agent deployment in 2026. The question is no longer whether to use reasoning models but which ones, at what cost, and with what trade-offs between speed, accuracy, and price.

The emerging answer: use fast, cheap standard models for simple retrieval and routing tasks; reserve reasoning models for the decision nodes where errors are costly. Agent architectures are becoming more sophisticated about this split.
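The split described above can be sketched as a simple router. This is an illustration under assumptions: the model names are placeholders, and `error_cost` is a made-up estimate that a real system would have to derive from its own risk rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    description: str
    error_cost: float  # hypothetical estimate of the damage if this step goes wrong


FAST_MODEL = "fast-standard-model"   # placeholder name, not a real model ID
REASONING_MODEL = "reasoning-model"  # placeholder name, not a real model ID


def choose_model(step: Step, cost_threshold: float = 100.0) -> str:
    # Send costly decision nodes to the reasoning model, everything else to the cheap one.
    return REASONING_MODEL if step.error_cost >= cost_threshold else FAST_MODEL


plan = [
    Step("look up order status", error_cost=1.0),
    Step("route ticket to billing queue", error_cost=5.0),
    Step("approve a refund", error_cost=500.0),
]

routing = {step.description: choose_model(step) for step in plan}
for description, model in routing.items():
    print(f"{description} -> {model}")
```

In practice the threshold is a budget decision as much as a technical one: lowering it buys accuracy on more steps at the price of latency and spend.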

4. The Enterprise Digital Workforce — Agents Replacing Headcount

This is the business story of 2026 — and it's no longer speculative.

PwC's May 2025 survey of 300 senior executives found that 79% say AI agents are already being adopted in their companies. The ROI projections are extraordinary: organizations expect an average return of 171% on agentic AI deployments. U.S.-based executives expect even more — 192%.

McKinsey's 2025 State of AI report put concrete numbers on the deployment reality: 23% of organizations are already scaling agentic AI across their enterprise, with another 39% actively experimenting. That means 62% of organizations have at least one agentic AI program in motion right now.

The market numbers confirm the momentum. Grand View Research projects the global AI agents market to grow from $3.9 billion in 2023 to $117.5 billion by 2032, a 49.6% CAGR. Gartner's August 2025 forecast predicts that 40% of enterprise applications will feature task-specific AI agents by 2026, up from less than 5% in 2025, and adoption is already tracking ahead of schedule.

What's happening in the real world

Salesforce now positions Agentforce as "digital labor" rather than just a tool. Through its "Customer Zero" initiative, the company’s internal Agentforce deployment has handled over 1.5 million customer conversations with a 75%–84% autonomous resolution rate. This success has allowed Salesforce to reduce its human support headcount from 9,000 to 5,000, reallocating those resources toward sales and engineering.

Microsoft has embedded agents throughout Microsoft 365 Copilot, creating autonomous workflows that span Teams, Outlook, SharePoint, and Excel. Enterprise customers can now deploy agents that schedule across calendars, summarize meeting threads, draft responses, and escalate exceptions — operating as autonomous members of the team.

IKEA's AI assistant, Billie, automated 47% of customer inquiries (approximately 3.2 million interactions). Instead of laying off the 8,500 employees whose roles became redundant, IKEA retrained them as remote interior design consultants, a move that has generated an additional $1.4 billion in revenue by turning "cost-center" support agents into "profit-center" sales experts.

Where this is heading

The conversation in boardrooms has shifted from "productivity enhancement" to "workforce transformation." CEOs are openly discussing agent-to-human ratios. The "one agent per person" framing Sam Altman has used — essentially a team of AI specialists available to every employee — is becoming the operational model at forward-leaning organizations. HR and legal teams are now directly involved in AI agent deployment decisions for the first time.

5. MCP & Protocol Standardization — The TCP/IP Moment

If you want to understand where the AI agent ecosystem is heading structurally, watch the protocols, not the models.

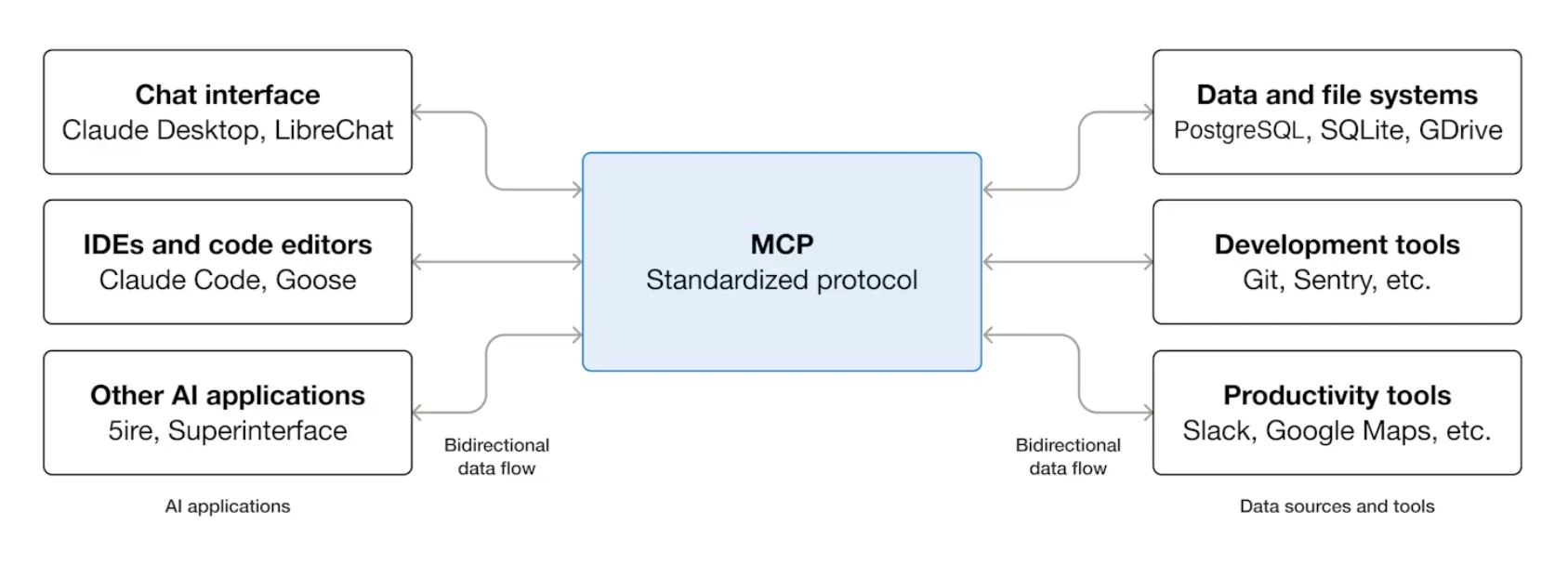

Anthropic released the Model Context Protocol (MCP) in November 2024 — an open standard for how AI agents connect to external data sources, tools, and APIs. Think of it as a universal adapter: instead of every AI company building custom integrations for every tool, MCP lets any agent connect to any MCP-compatible server using the same standard.
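For a feel of what "the same standard" means on the wire: as we understand the specification, MCP messages are JSON-RPC 2.0, with methods such as `tools/list` and `tools/call`. The sketch below only builds the request payloads. The tool name `search_issues` and its arguments are hypothetical, and the MCP specification remains the authoritative source for the schema.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)  # JSON-RPC requests carry a unique id per connection


def jsonrpc_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Ask a server which tools it offers.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool by name; "search_issues" is a made-up example tool.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "search_issues", "arguments": {"query": "login bug"}},
)

print(json.dumps(call_tool, indent=2))
```

The point of the universal adapter is visible even in this toy: the agent needs no tool-specific client code, only a tool name and a set of arguments.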

The adoption has been remarkable. Within months, hundreds of community-built MCP servers appeared — connecting agents to GitHub, Google Drive, Slack, Postgres, Brave Search, and dozens of other tools.

In March 2025, OpenAI announced native MCP support in their Agents SDK, followed by Google and Microsoft. When all three major AI labs adopt the same protocol, it's no longer experimental — it's infrastructure.

What's happening in the real world

Anthropic's open-source Model Context Protocol (MCP) has solidified its position as the industry standard for AI interoperability under Linux Foundation governance. With over 10,000 public servers, the ecosystem around the GitHub reference implementation now provides a mature, cross-model framework for integrating with major enterprise tools, databases, and APIs.

Block (formerly Square) became a lead enterprise adopter of MCP by co-founding the Agentic AI Foundation under the Linux Foundation. The company uses MCP to power Goose, an internal AI agent connected to over 60 custom servers. This ecosystem enables autonomous engineering workflows, including code refactoring, database migrations, and incident response, directly within Block's internal infrastructure.

Microsoft has adopted MCP as a primary integration standard for Windows AI Foundry and Microsoft 365 Copilot. This moves beyond experimental support to provide a unified framework for AI agents to securely access disparate data sources. By minimizing custom coding, MCP streamlines the flow of enterprise information, turning fragmented data into more accessible, "liquid" strategic assets.

Where this is heading

The protocol wars are effectively over — MCP won the first round. But 2026 brings a more complex challenge: agent-to-agent communication. Google announced the Agent2Agent (A2A) protocol in April 2025 — a standard for how agents talk to each other, not just to tools. When an orchestrator agent needs to delegate a task to a specialist agent from a different vendor, A2A defines how that handoff happens.

The combination of MCP (agent-to-tool) and A2A (agent-to-agent) is laying the actual infrastructure for multi-vendor agentic pipelines.

6. Multiagent Orchestration — Teams of Agents Replace Single Agents

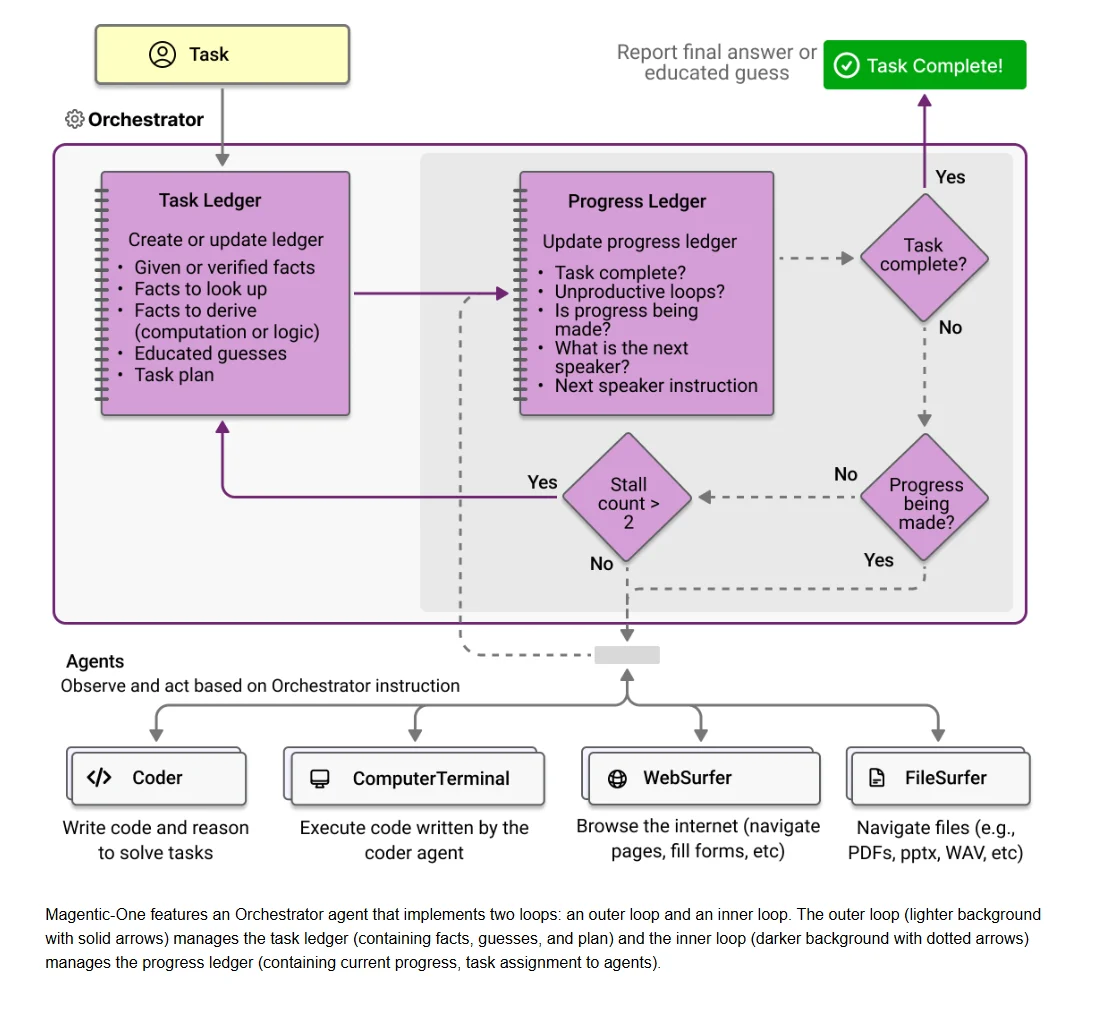

Single agents are hitting their ceiling. The most important architectural shift in 2026 is the move to multiagent systems — coordinated teams of specialized agents where each handles a specific domain, and an orchestrator agent manages the workflow.

Microsoft Research papers highlight that production AI deployments using multiple specialized models consistently outperform single-model approaches on complex tasks, with better reliability, lower latency, and easier debugging. The industry has noticed.

Since its 2023 debut, Microsoft’s AutoGen (now evolved into the Microsoft Agent Framework and AG2) has become a GitHub powerhouse by pioneering multi-agent orchestration. As one of the most starred AI projects on GitHub, the framework replaces single-prompt AI with collaborative teams: while one agent codes, others simultaneously review for security, run tests, and write documentation.

This "mixture of agents" approach consistently delivers more reliable, high-quality results than any single model working alone.

What's happening in the real world

LangChain's LangGraph framework — which models agent workflows as directed graphs — became the dominant production orchestration layer for complex agentic pipelines. Enterprises use it to build workflows where agents branch, loop, and conditionally escalate to human review when confidence falls below a threshold.
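The pattern of a workflow graph with a confidence-gated escalation can be sketched in plain Python. This deliberately does not use LangGraph's API; the node names, the `confidence` field, and the 0.8 threshold are assumptions for illustration.

```python
def draft_reply(state: dict) -> dict:
    # Stand-in for a model call that would produce the reply and a confidence score.
    state["reply"] = f"Draft reply to: {state['question']}"
    return state


def auto_send(state: dict) -> dict:
    state["route"] = "sent"
    return state


def human_review(state: dict) -> dict:
    state["route"] = "human_review"
    return state


def route_after_draft(state: dict, threshold: float = 0.8):
    # Conditional edge: escalate when confidence falls below the threshold.
    return "auto_send" if state["confidence"] >= threshold else "human_review"


NODES = {"draft": draft_reply, "auto_send": auto_send, "human_review": human_review}
EDGES = {
    "draft": route_after_draft,
    "auto_send": lambda state: None,     # terminal node
    "human_review": lambda state: None,  # terminal node
}


def run(state: dict, start: str = "draft") -> dict:
    node = start
    while node is not None:
        state = NODES[node](state)
        node = EDGES[node](state)
    return state


print(run({"question": "Where is my order?", "confidence": 0.95})["route"])  # sent
print(run({"question": "Cancel my contract", "confidence": 0.40})["route"])  # human_review
```

Keeping the routing rule in an edge function rather than inside a node is what makes the escalation policy easy to audit and change without touching the agents themselves.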

CrewAI took a more intuitive approach: define agents by role (researcher, writer, editor), assign them goals, and let them collaborate. CrewAI's open-source framework accumulated over 25,000 GitHub stars within months of launch and is powering production content pipelines, market research workflows, and customer support escalation systems.

Anthropic's internal research on multiagent systems found that agents are significantly more effective at catching each other's errors than catching their own — a finding that reinforces the case for orchestration over single-agent deployment. Their guidance on building effective agents is now widely cited as a production reference for enterprise teams.
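A toy version of the cross-checking idea: one agent produces work, a second agent reviews it, and the loop repeats until the reviewer has no objections. Both agents here are hard-coded stubs standing in for model calls, and the function names are hypothetical.

```python
def writer(task: str, feedback=None) -> str:
    # Stub for a producing agent: the first draft omits the currency,
    # the revision incorporates the reviewer's feedback.
    return "Total: 42" if feedback is None else "Total: 42 USD"


def reviewer(draft: str):
    # Stub for a reviewing agent: returns None when satisfied, else a complaint.
    return None if draft.endswith("USD") else "Missing currency unit"


def run_with_review(task: str, max_rounds: int = 3):
    feedback = None
    for round_number in range(1, max_rounds + 1):
        draft = writer(task, feedback)
        feedback = reviewer(draft)
        if feedback is None:
            return draft, round_number
    raise RuntimeError(f"No approved draft after {max_rounds} rounds: {feedback}")


result, rounds = run_with_review("Summarize the invoice total")
print(result, rounds)  # Total: 42 USD 2
```

The `max_rounds` cap matters in production: without it, two agents that disagree can loop indefinitely and burn budget.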

Where this is heading

The "just use one powerful agent" approach is giving way to "right agent for the right job" architecture. In 2026, enterprise agent deployments look less like a single super-assistant and more like an org chart — with specialized agents for research, analysis, writing, QA, compliance review, and human escalation, all coordinated by an orchestrator.

The teams that figure out how to build, monitor, and maintain these agent organizations will have a substantial operational advantage.

7. Vertical Domain Agents — The Specialists Are Winning

Here's what's becoming clear: general-purpose AI agents are losing to specialists trained natively on domain data, workflows, and regulatory environments. Not because the general models are bad — but because the specialists are dramatically better at the narrow tasks that matter most in regulated industries.

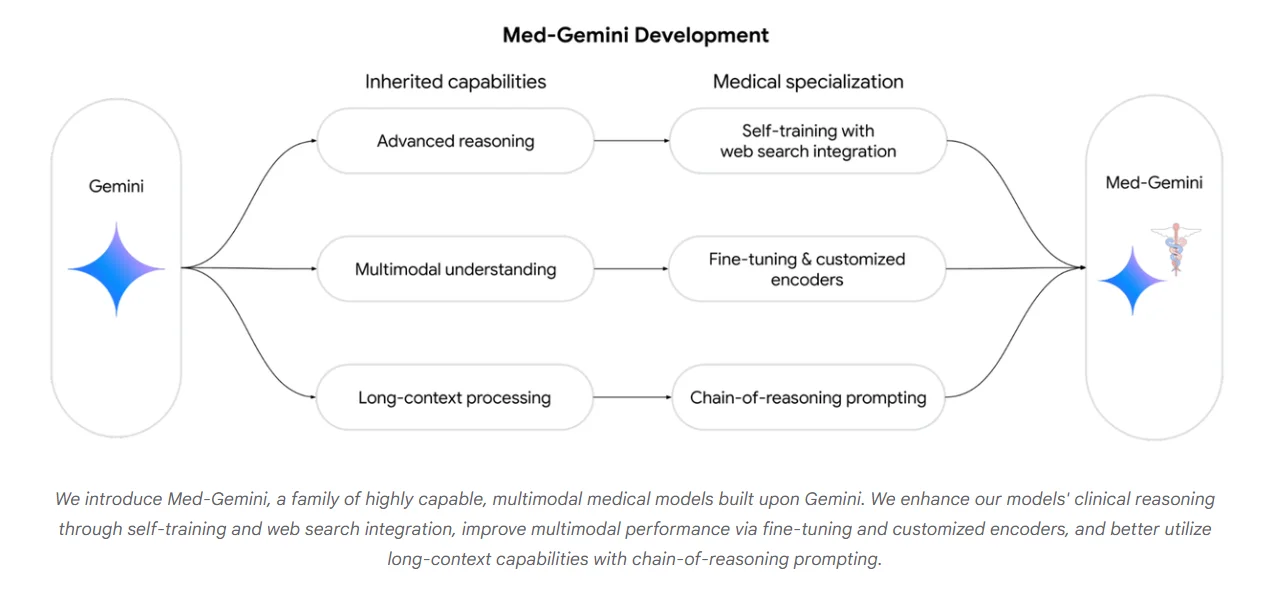

A 2025 study published in Nature Medicine found that specialized medical AI models trained on clinical data — most notably Med-Gemini (Google) and AMIE (Google) — significantly outperformed GPT-4 in clinical reasoning and safety metrics, specifically reducing hallucinations in high-stakes areas like drug interactions and contraindications. Gartner predicts that by 2028, over 50% of enterprise GenAI deployments will use domain-specific language models, up from less than 5% in 2023.

The moat in this market isn't the model. It's the training data flywheel. Every interaction with a legal AI makes it better at legal tasks — and a general-purpose competitor can't replicate that without the same proprietary legal data.

What's happening in the real world

Harvey AI, a platform that develops legal-specific AI models trained on verified case law, statutes, and regulatory documents, recently raised a $200 million growth round at an $11 billion valuation. It's deployed at Allen & Overy, PwC Legal, and the US Air Force JAG Corps. Harvey doesn't just draft: it cites verifiable case law with an accuracy that general models can't reliably match.

Abridge raised $150 million to deploy AI that listens to doctor-patient conversations and autonomously generates clinical notes. Its models are trained specifically on medical dialogue and terminology, and built for HIPAA compliance and EHR integration. The product saves physicians an estimated 2-3 hours per day on documentation, is integrated into Epic (the largest EHR provider), and is used by health systems like Mayo Clinic, Emory, and UPMC.

Kensho (a subsidiary of S&P Global) built financial AI trained on decades of market data, earnings transcripts, and regulatory filings. Their agents analyze financial documents and generate structured data outputs that power institutional investment workflows — with the accuracy requirements that general models fail at when handling options chains, derivative pricing, or SEC filing language.

Where this is heading

The vertical agent market is becoming winner-take-most within each domain. The company that owns the best legal training data wins legal AI. The company with the most clinical conversation data wins healthcare AI. Data accumulation — not compute or model architecture — is becoming the primary competitive moat.

Expect significant M&A in 2026 as large enterprises acquire vertical AI companies to lock in their data advantages. For how this plays out in financial services specifically — where AI-powered fraud detection, algorithmic trading, and compliance automation are transforming the industry — see our fintech trends for 2026.

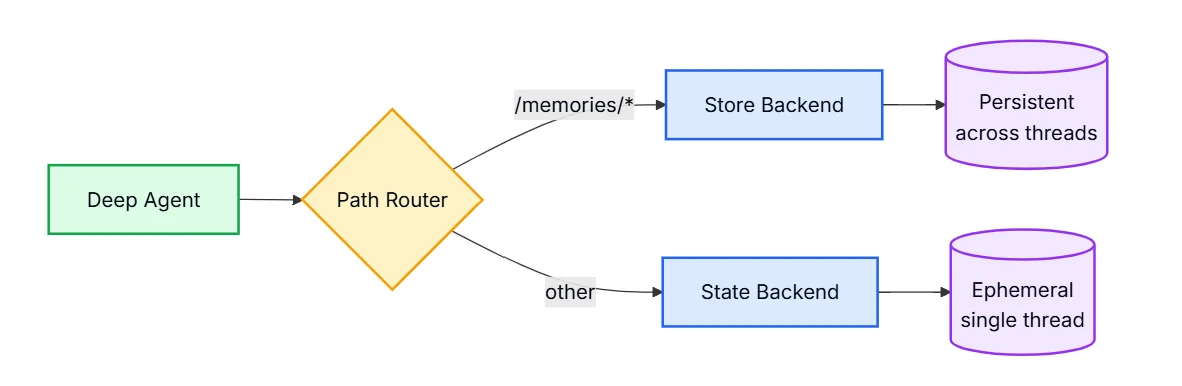

8. Persistent Memory — Agents That Actually Know You

Current AI agents have a fundamental problem: they forget. Every new conversation starts from zero. No memory of your preferences, your history, your prior decisions. It's the equivalent of hiring a brilliant assistant who gets amnesia every night.

The persistent memory layer is being built — and it's creating massive lock-in dynamics.

OpenAI launched persistent memory for ChatGPT Plus users in 2024, allowing the model to remember facts across sessions. Users immediately reported dramatically improved utility — the agent stopped asking the same onboarding questions, started anticipating preferences, and provided contextually appropriate responses. Adoption of the feature was described internally as "faster than any previous feature rollout."

Mem0 — an open-source memory infrastructure project — has accumulated over 50,000 GitHub stars, becoming one of the fastest-growing AI infrastructure repositories in history. The fact that the developer community moved this fast on memory tooling signals how acutely the statelessness problem is felt.

What's happening in the real world

Google has introduced a 'Memory Import' feature for Gemini, allowing users to upload their chat history and "memories" from ChatGPT (via ZIP files) so they don't have to start from zero when switching platforms. This confirms the lock-in dynamics: memory is so valuable that it is now a competitive tool used to lure users from one ecosystem to another.

Letta (formerly MemGPT) built an open-source framework specifically for long-term memory management in agents, using a hierarchical memory architecture that mirrors human memory systems — moving less-accessed memories to "archival storage" while keeping recent context in active working memory. This allows agents to handle long-running projects that span weeks.
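The two-tier idea can be sketched in a few lines. This is a simplification under stated assumptions: it evicts the oldest item rather than the least-accessed one, uses keyword matching instead of semantic search, and the `TieredMemory` class is hypothetical rather than Letta's actual API.

```python
from collections import deque


class TieredMemory:
    """Small working memory that spills its oldest items into an archive."""

    def __init__(self, working_capacity: int = 3):
        self.working = deque()
        self.archive = []
        self.working_capacity = working_capacity

    def remember(self, fact: str) -> None:
        self.working.append(fact)
        # When working memory overflows, move the oldest facts to the archive.
        while len(self.working) > self.working_capacity:
            self.archive.append(self.working.popleft())

    def recall(self, keyword: str) -> list:
        # Search recent context first, then fall back to archival storage.
        keyword = keyword.lower()
        found = [fact for fact in self.working if keyword in fact.lower()]
        found += [fact for fact in self.archive if keyword in fact.lower()]
        return found


memory = TieredMemory(working_capacity=2)
for fact in ["Project uses Postgres 16", "Team prefers tabs", "Deploys happen on Fridays"]:
    memory.remember(fact)

print(list(memory.working))       # ['Team prefers tabs', 'Deploys happen on Fridays']
print(memory.recall("postgres"))  # ['Project uses Postgres 16']
```

Nothing is ever deleted in this sketch, only demoted, which is the property that lets an agent pick up a project weeks later.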

Zed Industries integrated persistent context into their AI coding assistant, allowing it to remember your project's architectural decisions, coding conventions, and past bug patterns — meaning the agent gets genuinely more useful the longer you use it on a specific codebase.

Where this is heading

Persistent memory is the mechanism by which AI agents become irreplaceable. An agent that has two years of your context, preferences, and history is genuinely harder to switch away from than a commodity model.

In 2026, the memory layer is becoming a key battleground — every major AI platform is racing to accumulate the richest, most useful memory of their users. For enterprises, this raises serious data governance questions: who owns the agent's memory? What happens to it if you switch providers? These questions will drive significant procurement and legal debate this year.

9. Embodied Robotic Agents — AI Gets a Body

The convergence of large language models and physical robotics is happening faster than most predicted — and the funding numbers reflect it.

Figure AI, which raised $675 million at a $2.6 billion valuation in early 2024, has since exploded to a $39 billion valuation following its 2025 Series C. This massive capital influx from investors like Microsoft, NVIDIA, Intel and OpenAI has shifted humanoid robots from research prototypes to active industrial deployments. These agents now run on advanced Vision-Language-Action "brains," allowing them to generalize across complex manufacturing and warehouse tasks without the need for manual, task-specific programming.

Physical Intelligence (π) has evolved from its initial $400 million launch into a decacorn-valued leader in embodied AI, currently seeking a $1 billion round at an $11 billion+ valuation. Its original foundation model, π₀, has been superseded by versions like π₀.₅ and π₀.₆, which now support open-world generalization and long-horizon memory (MEM). These advancements allow a single model to perform complex, 10-minute+ tasks across entirely new environments, ranging from household chores to industrial logistics, without any site-specific programming.

That's the breakthrough: generalization. Previous industrial robots required custom programming for every new task. Embodied agents powered by foundation models learn new tasks from a handful of demonstrations, the same way a human does.

What's happening in the real world

Figure AI signed a commercial deployment agreement with BMW to operate humanoid robots on the manufacturing floor in South Carolina — the first commercial humanoid deployment at a major automotive manufacturer. Their Figure 02 model has already completed a successful 10-month stint on the active assembly line, contributing to the production of over 30,000 BMW X3 vehicles.

1X Technologies (formerly Halodi Robotics) raised $100 million to build humanoid robots specifically designed for service environments — security, retail, and logistics. Their primary robot, EVE, is currently deployed in professional settings like security (guarding) and logistics.

Boston Dynamics transitioned Atlas from hydraulic to electric in 2024, integrating AI foundation model control alongside their mechanical engineering. The new Atlas is faster, more dexterous, and can be updated with new skills via software — a fundamental shift from the programmed-motion model of industrial robotics.

Where this is heading

2026 is the year embodied AI moves from impressive demonstration to measured commercial deployment. The initial beachhead applications are clear: warehouse picking, last-mile logistics, and manufacturing assembly assist — environments that are structured enough for current capabilities but physically demanding enough that labor economics favor automation.

The harder environments — construction, agriculture, elder care — are 3-5 years out. But the companies building the underlying foundation models for physical manipulation today are establishing the data flywheels that will dominate those markets when the hardware matures.

10. Agentic Mesh Networks — The Internet of Agents

This is where all the other trends converge into something genuinely new. The emergence of agent-to-agent communication protocols — combined with MCP, reasoning models, and multiagent orchestration — is creating the conditions for networks of agents that dynamically recruit, delegate to, and negotiate with each other at runtime.

This isn't a research concept anymore: the protocols are being standardized across the industry.

Agent2Agent (A2A), introduced by Google in April 2025 and now maintained as an open-source project under the Linux Foundation, provides the formal specification for how agents from different vendors discover capabilities, assign tasks, and report results. With over 50 foundational partners adopting it since launch, including Salesforce, SAP, Workday, and ServiceNow, the enterprise software ecosystem is converging on a common standard for inter-agent communication.

The analogy that holds: MCP is HTTP (standardizing how agents access resources and tools), and A2A is TCP (standardizing how agents coordinate with each other). Together, they form the established network stack for the web of agents.

What's happening in the real world

Google launched A2A with immediate production integrations for Agentspace, their enterprise agent platform — allowing a single orchestrator agent to dynamically delegate research tasks to a Salesforce agent, financial analysis to a SAP agent, and HR queries to a Workday agent, all within a single user request.
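The discover-then-delegate flow can be sketched with an in-memory registry. A2A does describe agents via published "Agent Cards", but the fields below are a simplified stand-in, not the A2A schema, and the agents are local stub functions rather than remote services.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AgentCard:
    """Simplified capability advertisement; not the real A2A Agent Card schema."""
    name: str
    skills: frozenset
    handle: Callable[[str], str]


class Registry:
    def __init__(self):
        self._cards = []

    def register(self, card: AgentCard) -> None:
        self._cards.append(card)

    def find(self, skill: str) -> AgentCard:
        # Discovery: return the first agent that advertises the requested skill.
        for card in self._cards:
            if skill in card.skills:
                return card
        raise LookupError(f"No agent advertises skill {skill!r}")


def delegate(registry: Registry, skill: str, task: str) -> dict:
    card = registry.find(skill)
    return {"agent": card.name, "result": card.handle(task)}


registry = Registry()
registry.register(AgentCard("crm-agent", frozenset({"account_research"}),
                            lambda task: f"crm handled: {task}"))
registry.register(AgentCard("finance-agent", frozenset({"refund_calculation"}),
                            lambda task: f"finance handled: {task}"))

outcome = delegate(registry, "refund_calculation", "order 1001")
print(outcome)  # {'agent': 'finance-agent', 'result': 'finance handled: order 1001'}
```

The orchestrator never hard-codes which vendor handles refunds; it asks for a skill and takes whoever advertises it, which is the property that makes cross-vendor pipelines possible.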

Salesforce joined the A2A ecosystem on day one and produced some of the first commercially deployed examples of cross-vendor agent collaboration in enterprise workflows. Its Agentforce platform is fully A2A-compliant, allowing a Salesforce Service Agent to automatically delegate a specialized task (like a complex refund calculation) to a financial agent in another system, and vice versa, without custom API coding.

LangGraph's distributed execution model allows individual agents in a graph to run on separate infrastructure — meaning a complex agentic workflow can span cloud providers, on-premise systems, and third-party APIs, with the orchestration layer handling coordination transparently.

Where this is heading

The agentic mesh is still early — today it's about protocol adoption and initial integrations. But the trajectory leads somewhere profound: a world where any task can be automatically decomposed, routed to the best available specialized agent (regardless of vendor), executed, and verified — all without a human designing the workflow in advance.

The enterprises that build strong inter-agent governance frameworks now — defining what their agents can delegate, what data they can share, and what actions require human approval — will be positioned to take full advantage as the ecosystem matures through 2026 and 2027.

The Common Thread

Look across all ten of these trends and one pattern dominates: AI agents are crossing the threshold from tools that help humans do work to autonomous entities that do work on behalf of humans.

That threshold crossing is happening at different speeds in different domains. In software engineering, it's happening now — today's coding agents are already handling entire features and PR cycles with light human oversight. In enterprise knowledge work, it's happening through platforms like Agentforce and Copilot as companies deploy agents to handle defined workflows at scale. In physical work, it's happening in controlled commercial environments with clear ROI. In fully autonomous coordination between agents from different vendors — the agentic mesh — it's in early infrastructure stages.

What's common across all of them is the investment signal. The capital flowing into agent infrastructure — protocols, memory layers, orchestration frameworks, embodied hardware — reflects a collective bet that the agent paradigm isn't an incremental improvement on existing software. It's a different category.

The practical implication for anyone building products or running organizations: the question is no longer whether to engage with agents, but which bets to make now versus which to wait on. Computer-use agents, agentic coding, and enterprise digital workforce deployments are live bets with measurable ROI today. Agentic mesh networks and persistent memory lock-in are 12-24 month bets worth positioning for. Embodied robotics, for most organizations, is a 3-5 year horizon.

The companies that will look back at 2026 as a pivot point won't necessarily be the ones who moved fastest. They'll be the ones who read the actual deployment data, made the right bets at the right time, and built the governance infrastructure that let them scale agents without blowing up when something went wrong. The technology is moving faster than the organizational readiness. The bottleneck — as always — is execution.
